Explore the world within and bring clarity to your data

Begin a journey toward taking control of your data management while keeping an eye on its social and ecological impact.

Nestled in the Basque mountains, I maintain a deep relationship with nature and the ocean. My journey as a scientist is shaped by my focus on both technique and biology.

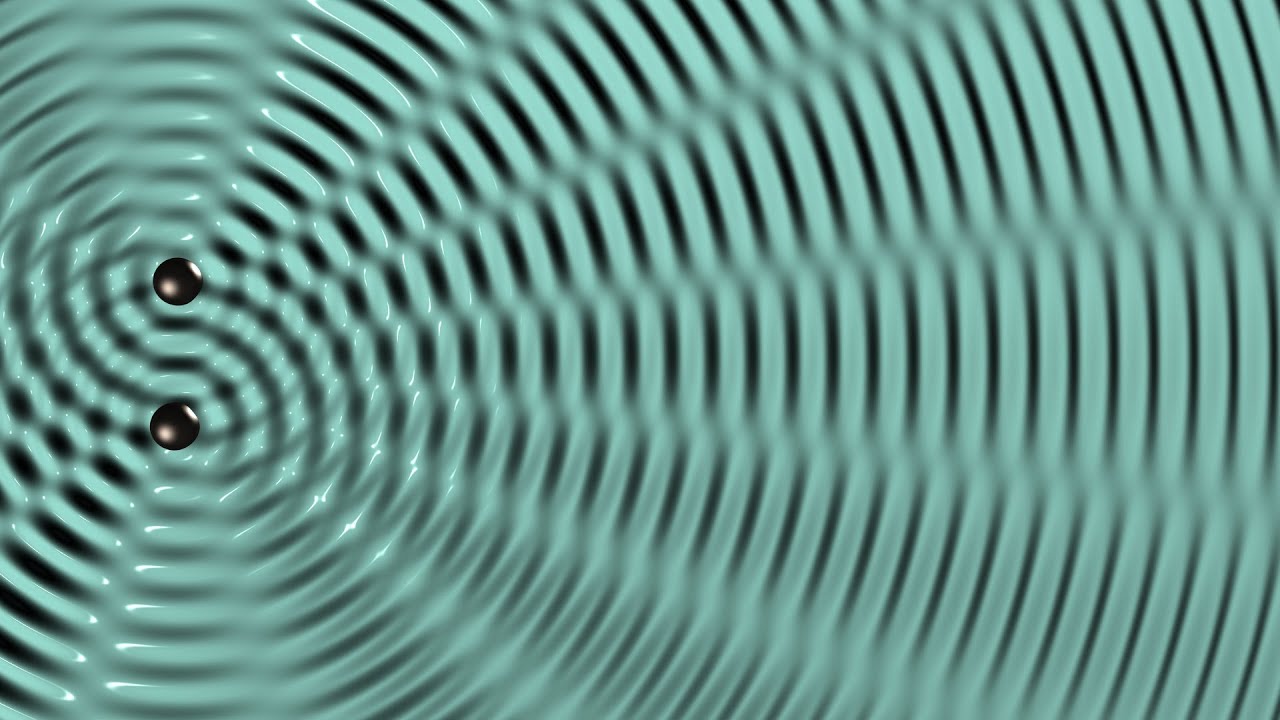

Explore Networks

Everything is interconnected. Your data are a reflection of your organisation.

Enjoy Nature

Nature is an infinite source of inspiration and wisdom. Throughout my journey as a scientist, nature has held a growing place and helped me align my work with my values at the intersection of technique, biology and philosophy…


Sleep as Rebellion: The Colonization of Time Itself

Article 2 of 4: From Waking Hours to Unconsciousness

Read time: 5 minutes

Core idea: Your attention is the scarce resource capitalism is destroying. Sleep is the last space where you’re free, and it’s under assault.

Long story short:

- We’ve timed everything: work, leisure, even meditation. Your brain can’t process at this speed.
- Capitalism requires […]

The Calculator Problem: From Tools to Disempowerment

Article 1 of 4: Culture, Knowledge, and Illich’s Threshold

This is the first in a series about institutional violence and the blueprints we’re handed for how to live. I’ll start with technology, move to time, then to the body itself, before revealing the frame connecting them all. My method is philosophical but grounded. As a […]